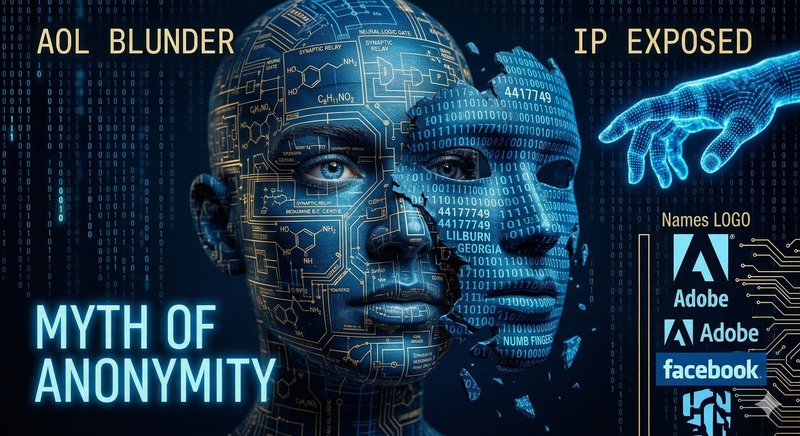

The Anonymization Risk: The AOL Blunder That Intellectual Property Owners Keep Forgetting

Published on 19 March 2026

Discover why deleting your name from a document doesn't protect your secrets, and how true patent protection demands total data eradication.

The False Promise of the "Anonymous User"

In the early years of the commercial web, the technology industry settled on a convenient but deeply dangerous premise: once a large dataset is stripped of names, email addresses, and other direct identifiers, it stops being sensitive. Remove the name tag, the reasoning went, and the data becomes an "anonymous" and harmless mass.

This house of cards collapsed abruptly and publicly in August 2006, in a now emblematic blunder by the internet service provider AOL. Hoping to support outside research, the company published a log of about 20 million search queries made by roughly 650,000 of its users over a three-month period. To "protect" them, AOL replaced each user's name with a numeric ID.

AOL was thoroughly convinced that its database was completely secure because it was "anonymous." They were tragically wrong.

The Anatomy of an Unmasking

Within a matter of days, data analysts, academics, and journalists from The New York Times shattered the myth of "anonymized analysis." What AOL failed to understand is that you are what you search for, what you say, and how you say it.

By analyzing the search history of one specific user, the now famous "User No. 4417749," the journalists did not need her name to work out exactly who she was. Her searches included phrases like "numb fingers" and "60 single men," along with highly specific queries such as "landscapers in Lilburn, Ga," a small Georgia town. By cross-referencing these seemingly unrelated interests, health questions, and geographic clues, the reporters narrowed the field to one unmistakable person.

In less than a week, journalists knocked on the door of Thelma Arnold, a 62-year-old widow in Lilburn. She confirmed that the searches were hers. The case taught the world a hard lesson of data science: stripping names from a large body of free text does not make it anonymous. Every textual trace carries pieces of its author's identity, and an analyst or an algorithm only needs to connect those patterns to put a first and last name back on the information.
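The cross-referencing step described above can be sketched in a few lines of Python. This is a minimal illustration under loud assumptions: the log entries, the numeric IDs, the directory, and the helper names (`queries_by_user`, `infer_clues`, `candidates`) are all hypothetical, and the clue extraction is deliberately crude. It is not a reconstruction of how the reporters actually worked.

```python
from collections import defaultdict

# Hypothetical pseudonymized search log: (numeric user ID, query).
# Illustrative stand-ins only, not records from the actual AOL release.
LOG = [
    (1001, "numb fingers"),
    (1001, "landscapers in lilburn ga"),
    (1001, "single men over 60"),
    (1002, "numb fingers"),
    (1002, "cheap flights to denver"),
]

# Hypothetical public directory an attacker could cross-reference.
DIRECTORY = [
    {"name": "Resident A", "town": "lilburn", "age": 62},
    {"name": "Resident B", "town": "lilburn", "age": 34},
    {"name": "Resident C", "town": "denver", "age": 61},
    {"name": "Resident D", "town": "denver", "age": 29},
]

KNOWN_TOWNS = ("lilburn", "denver")


def queries_by_user(log):
    """Group queries under each numeric pseudonym."""
    grouped = defaultdict(list)
    for user_id, query in log:
        grouped[user_id].append(query)
    return grouped


def infer_clues(queries):
    """Crude clue extraction: a town name and a rough age hint."""
    text = " ".join(queries)
    clues = {}
    for town in KNOWN_TOWNS:
        if town in text:
            clues["town"] = town
    if "over 60" in text:
        clues["min_age"] = 60
    return clues


def candidates(clues, directory):
    """Keep only directory entries consistent with every clue."""
    matches = directory
    if "town" in clues:
        matches = [p for p in matches if p["town"] == clues["town"]]
    if "min_age" in clues:
        matches = [p for p in matches if p["age"] >= clues["min_age"]]
    return [p["name"] for p in matches]


for user_id, queries in queries_by_user(LOG).items():
    print(user_id, candidates(infer_clues(queries), DIRECTORY))
```

In this toy data, user 1001 narrows to a single directory entry because two independent clues intersect, while user 1002 still matches two people. The numeric ID hides nothing once the queries themselves are left intact.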

Anonymized Texts in the Era of AI Transcription

Fast forward to the present day. Organizations that generate large volumes of Intellectual Property (IP), such as pharmaceutical labs, software engineering firms, and corporate law practices, are lowering their guard and making exactly the same mistake as AOL, but on a vastly more destructive scale.

Every day, cutting-edge companies hand hours of confidential meeting recordings, methodological developments, and brainstorming sessions to commercial Artificial Intelligence (AI) transcription clouds. When cybersecurity teams or internal counsel raise the alarm, executives tend to fall back on the provider's own promises: "It doesn't matter that this provider retains our audio and text on their servers, because in their terms and conditions they promise to store our drafts anonymously, unlinking our brand."

When the discoveries behind your Intellectual Property are at stake, assuming that removing your company logo from a text makes it safe is corporate suicide.

The moment you record a technical meeting about the formula for a new synthetic polymer, or dictate the architecture of a new financial app, your specialized jargon becomes your fingerprint. Removing your brand or name tag is useless if the external AI provider quietly retains copies to train its own foundation models. The model can learn the precise way your company structures its methods and solves intricate problems. Your own "masked" information then feeds an algorithm that, tomorrow, may reproduce your proprietary solutions when your direct competitors ask it similar questions. You have just given away your patent for free.
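The idea that jargon acts as a fingerprint can be illustrated with a short sketch. It compares the vocabulary of a transcript that contains no company name against short public text profiles and picks the closest one by cosine similarity of word counts. Everything here is an assumption made for illustration: the company labels, the profile texts, the transcript, and the function names are hypothetical, and real attribution methods are far more sophisticated than this bag-of-words comparison.

```python
import math
import re
from collections import Counter


def term_counts(text):
    """Lowercase word counts; no stop-word removal, to keep the sketch short."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Hypothetical public text (for example, press releases) per company.
PUBLIC_PROFILES = {
    "Company A": "ring opening polymerization of lactide with a tin "
                 "catalyst yields a biodegradable polymer",
    "Company B": "low latency order matching engine for mobile payments "
                 "with ledger reconciliation",
}

# Hypothetical "anonymized" meeting transcript: no company name anywhere.
TRANSCRIPT = (
    "we agreed the tin catalyst loading for the lactide polymerization "
    "run should drop before the polymer anneal"
)


def best_match(transcript, profiles):
    """Return the closest profile name and the score for every profile."""
    t = term_counts(transcript)
    scores = {name: cosine(t, term_counts(text)) for name, text in profiles.items()}
    return max(scores, key=scores.get), scores


name, scores = best_match(TRANSCRIPT, PUBLIC_PROFILES)
print(name, scores)
```

Even with the company name absent, the shared technical terms pull the transcript toward one profile. The only overlap with the other profile is a common word, which is why its score stays low.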

To assume that an algorithm cannot figure out who you are just because you deleted your name is to ignore the entire history of the internet. Requiring that your data be completely deleted once processing is finished is the only appropriate posture in the face of modern corporate espionage.

Source: The New York Times, "A Face Is Exposed for AOL Searcher No. 4417749" (August 2006).
